The Promise vs. Reality of AI Code Assistants for Product Integrations

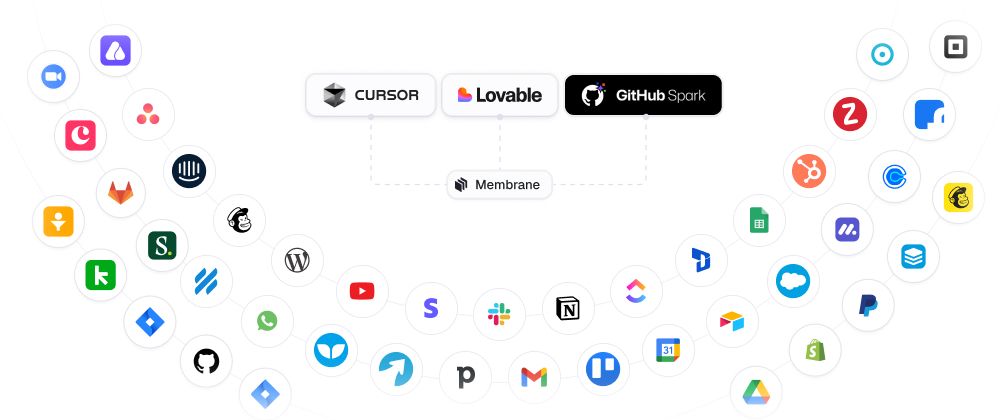

AI code assistants like GitHub Copilot, Cursor, Cody, Codeium, and Lovable have transformed the developer experience. With a few prompts, developers can scaffold code, generate boilerplate, and even spin up simple apps or integrations in hours instead of days.

The hype is justified: building integrations used to mean wading through API docs, dealing with OAuth flows, and debugging endless API errors. Now, an assistant can generate the initial connector with a single request.

But there’s a gap.

Scaffolding ≠ Scaling.

AI assistants excel at creating the first draft of an integration. They struggle, however, to make it production-ready: to do exactly what you want reliably, and to provide sufficient detail when something doesn’t work.

This post explores that gap. We’ll examine:

- The landscape of AI coding assistants in 2025.

- Why integrations are uniquely challenging.

- Where assistants hit scaling and maintenance limits.

- How Membrane bridges the gap by turning generated code into production-grade, maintainable integrations.

- How to connect your IDE or coding agent directly into Membrane using the new CLI workflow.

The Current AI Coding Assistant Landscape

In 2025, developers can choose from a rich ecosystem:

- GitHub Copilot (now with Spark): tightly integrated with GitHub repos, excels at suggesting boilerplate code and patterns directly in VS Code.

- Cursor: an IDE purpose-built for AI-pair programming, context-aware of your entire repo.

- Cody (Sourcegraph): optimized for large-codebase navigation, retrieval, and context.

- Lovable: a “vibe coding” tool that can generate entire apps from prompts.

- Windsurf: strong at auto-completion across larger scopes, with GPT-5 integration.

- Codeium: free, fast, and increasingly adopted by open-source developers.

Each of these tools can scaffold integrations: writing OAuth flows, generating API calls, and wiring up SDKs.

Developers have shared examples of this in communities like Reddit and Dev.to.

These tools are powerful starting points. But once you go beyond a single prototype integration, cracks appear.

Why Integrations Are Harder Than They Look

An integration isn’t just an API call. A production-ready integration requires:

- Authentication management: OAuth2, API keys, token refresh, multi-tenant support.

- Schema exploration and mapping: ensuring fields in one system map correctly to another, even as APIs change.

- Custom objects and fields: many applications support custom data objects and fields, and your code needs to handle them correctly.

- Error handling: retries, rate limiting, exponential backoff, partial failure recovery.

- Testing & monitoring: logging requests, surfacing failures, alerting customers.

- Upgrades & maintenance: APIs deprecate, authentication methods change, customers request new fields.
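To make the schema-mapping and custom-field items above concrete, here is a minimal TypeScript sketch. Everything in it is hypothetical and invented for illustration: the `FieldMapping` shape, the `mapRecord` function, and the field names in the usage note are not taken from any real API.

```typescript
// Hypothetical shapes for illustration; no real vendor API is modeled here.
type SourceRecord = Record<string, unknown>;

interface FieldMapping {
  // Source field name -> field name in your own product's data model.
  known: Record<string, string>;
}

interface MappedRecord {
  // Fields the mapping knows how to translate.
  fields: Record<string, unknown>;
  // Fields the mapping does not know, such as customer-defined custom fields.
  customFields: Record<string, unknown>;
}

function mapRecord(source: SourceRecord, mapping: FieldMapping): MappedRecord {
  const result: MappedRecord = { fields: {}, customFields: {} };
  for (const [fieldName, value] of Object.entries(source)) {
    // hasOwnProperty avoids matching inherited keys such as "toString".
    if (Object.prototype.hasOwnProperty.call(mapping.known, fieldName)) {
      result.fields[mapping.known[fieldName]] = value;
    } else {
      // Keep unknown fields instead of silently dropping them.
      result.customFields[fieldName] = value;
    }
  }
  return result;
}
```

Calling `mapRecord({ first_name: "Ada", cf_tier__c: "gold" }, { known: { first_name: "firstName" } })` would place `first_name` under `fields.firstName` and keep `cf_tier__c` under `customFields`. The point of the design is that an unexpected field is preserved and visible rather than lost.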

In isolation, any coding agent can scaffold one of these steps. But across 20+ integrations, production-readiness becomes the main issue: consistency and reliability are hard to maintain. Coding agents need context, and supplying it across a complex system is difficult.

Where AI Code Assistants Hit Their Limits

Here’s what we see in the wild:

1. Inconsistent scaffolding

Different assistants generate different coding patterns. One developer noted:

“Kiro, DeepSeek, Jules — they all kept changing their code-style decisions. I always had to take over the keyboard.”

This creates integration sprawl: each connector looks and behaves differently.

2. Brittle authentication flows

A common Reddit complaint:

Assistants scaffold the happy path of OAuth, but rarely handle refresh tokens, scope changes, or tenant-specific config.
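As a rough sketch of what sits beyond the happy path, the function below decides whether a stored token can be used, should be refreshed, or needs the user to re-authorize. The `StoredToken` shape, the `decideTokenAction` name, and the 60-second safety margin are assumptions made for this example; real providers differ in field names and expiry rules.

```typescript
// Hypothetical token record; real OAuth providers use different field names.
interface StoredToken {
  accessToken: string;
  refreshToken?: string;
  expiresAtMs: number; // absolute expiry, Unix time in milliseconds
  scopes: string[];
}

type TokenAction = "use" | "refresh" | "reauthorize";

function decideTokenAction(
  token: StoredToken,
  requiredScopes: string[],
  nowMs: number,
  skewMs: number = 60000, // assumed margin: treat tokens expiring within 60s as expired
): TokenAction {
  // A scope change cannot be fixed by refreshing; the user must grant access again.
  const missingScope = requiredScopes.some((s) => token.scopes.indexOf(s) === -1);
  if (missingScope) return "reauthorize";

  const expiringSoon = token.expiresAtMs - nowMs <= skewMs;
  if (!expiringSoon) return "use";

  // Without a refresh token the only way forward is a new authorization.
  return token.refreshToken ? "refresh" : "reauthorize";
}
```

In a multi-tenant setup, a check like this would run per tenant before each API call, which is exactly the kind of logic a generated happy-path flow tends to leave out.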

3. Edge-case blindness

Agents happily generate fetch() calls but don’t account for rate limits, pagination quirks, or throttling rules. These bugs appear only in production, and they erode trust quickly.
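One way to account for rate limits is to decide, for each failed response, whether to retry and how long to wait. The sketch below uses exponential backoff and honors a server-provided `Retry-After` value (a standard HTTP header) when one is present. The `decideRetry` function, its parameter names, and its default limits are assumptions for illustration, and jitter is left out to keep the example deterministic.

```typescript
interface RetryDecision {
  retry: boolean;
  delayMs: number;
}

function decideRetry(
  status: number,                    // HTTP status of the failed response
  attempt: number,                   // 1-based index of the attempt that just failed
  retryAfterSeconds: number | null,  // parsed Retry-After header, or null if absent
  maxAttempts: number = 5,           // assumed default
  baseDelayMs: number = 500,         // assumed default
  maxDelayMs: number = 30000,        // assumed default cap for computed delays
): RetryDecision {
  // Retry only on rate limiting (429) and server errors (5xx).
  const retryable = status === 429 || (status >= 500 && status <= 599);
  if (!retryable || attempt >= maxAttempts) {
    return { retry: false, delayMs: 0 };
  }
  // If the server says how long to wait, follow it rather than guessing.
  if (retryAfterSeconds !== null) {
    return { retry: true, delayMs: retryAfterSeconds * 1000 };
  }
  // Exponential backoff: base, 2x base, 4x base, ... capped at maxDelayMs.
  const delayMs = Math.min(baseDelayMs * Math.pow(2, attempt - 1), maxDelayMs);
  return { retry: true, delayMs };
}
```

A caller would wrap its `fetch()` call in a loop, sleep for `delayMs` when `retry` is true, and surface the error otherwise. In production you would typically also add random jitter to the computed delay so that many clients do not retry at the same instant.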

4. Maintenance drag

One engineer migrating from Lovable to Cursor put it bluntly:

Each integration becomes a snowflake — difficult to update at scale.


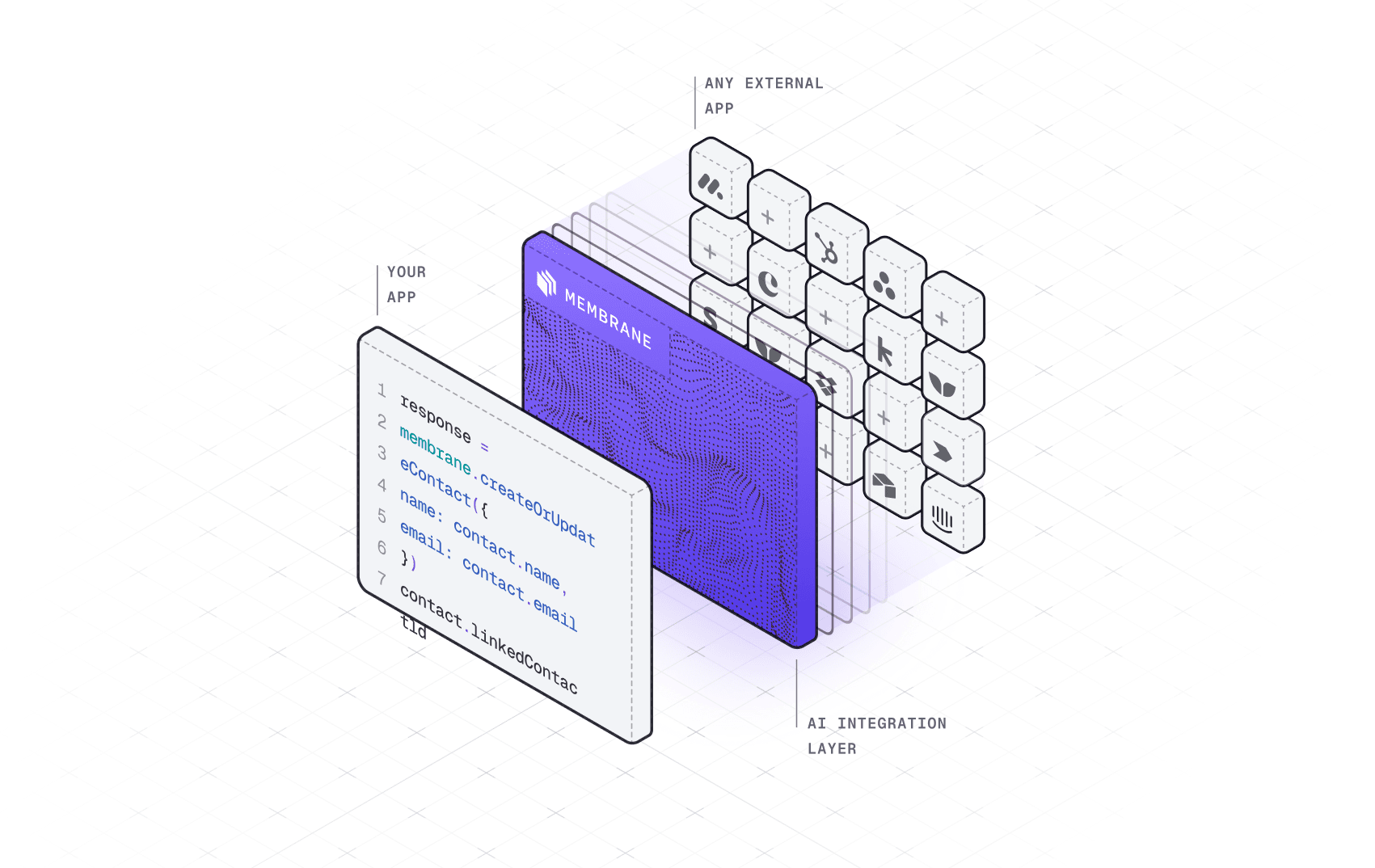

Membrane as the Missing Layer

This is where Membrane comes in.

Membrane is a universal integration layer designed for AI-built integrations. Think of it as the operating system for integrations generated with AI:

- AI-native: provides structured context that assistants can consume.

- Universal: supports any SaaS API.

- Scalable: built for dozens or hundreds of integrations, not just one.

How Membrane Bridges the Gap

- Consistent Connector Framework. Instead of each agent scaffolding a connector differently, Membrane provides a consistent runtime and schema for integrations. Agents generate code into Membrane's framework, ensuring maintainability across your entire integration ecosystem.

- Authentication handled once. OAuth flows, refresh tokens, and multi-tenant secrets are abstracted by Membrane. This means your AI assistants don't need to reinvent authentication; they simply plug into Membrane's established auth layer.

- Production-grade runtime. Membrane orchestrates retries, pagination, and error handling. This shields your AI-generated code from the common fragilities that appear in production environments.

- Observability baked in. Every integration running in Membrane has logs, metrics, and monitoring. You won't need to add monitoring as an afterthought; it's already built into the system.

- Scale without chaos. Whether you build 5 or 50 integrations, they all run inside the same Membrane runtime — reducing drift and making upgrades easy.
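The value of a shared connector contract can be sketched generically. The code below is a hypothetical illustration and is not Membrane's actual API: `Connector`, `ResultPage`, `collectAll`, and `inMemoryConnector` are names invented for this example. It shows how cross-cutting logic such as pagination can be written once when every connector implements the same interface.

```typescript
// Hypothetical contract; NOT Membrane's API. Names are invented for illustration.
interface ResultPage<T> {
  items: T[];
  nextCursor: string | null; // null means there are no more pages
}

interface Connector<T> {
  connectorName: string;
  // Synchronous for brevity; a real connector would return a Promise
  // and call the vendor's API here.
  listPage(cursor: string | null): ResultPage<T>;
}

// Pagination is written once and works for any connector that follows the contract.
function collectAll<T>(connector: Connector<T>, maxPages: number = 100): T[] {
  const all: T[] = [];
  let cursor: string | null = null;
  for (let pageIndex = 0; pageIndex < maxPages; pageIndex++) {
    const result: ResultPage<T> = connector.listPage(cursor);
    all.push(...result.items);
    if (result.nextCursor === null) return all;
    cursor = result.nextCursor;
  }
  // Guard against APIs that never stop returning a cursor.
  throw new Error(`${connector.connectorName}: exceeded ${maxPages} pages`);
}

// A stand-in connector backed by an array, useful for trying the sketch out.
function inMemoryConnector<T>(connectorName: string, items: T[], pageSize: number): Connector<T> {
  return {
    connectorName,
    listPage(cursor) {
      const start = cursor === null ? 0 : Number(cursor);
      const end = start + pageSize;
      return {
        items: items.slice(start, end),
        nextCursor: end < items.length ? String(end) : null,
      };
    },
  };
}
```

With this shape, `collectAll(inMemoryConnector("demo", [1, 2, 3, 4, 5], 2))` walks three pages and returns all five items. A fix to the pagination loop applies to every connector at once instead of being repeated per integration.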

Connect Your AI Agent / IDE to Membrane

Here’s a quick step-by-step guide to using your existing coding agents with Membrane:

1. Sign up to Membrane to generate your credentials

Your workspace key will be auto-generated. You can find it in your Settings.

2. Install Membrane CLI

npm i -g @membranehq/cli

3. Initialize Membrane CLI

Navigate to the root of your project, copy your workspace key and workspace secret, and run the following:

membrane init --key <workspaceKey> --secret <workspaceSecret>

or add the following lines to your membrane.config.yml file:

workspaceKey: <workspaceKey>

workspaceSecret: <workspaceSecret>

4. Launch the agent in the root of your product repository

membrane

5. Connect your AI Agent

Press the “a” key inside the Membrane CLI to connect your AI agent or IDE.

6. Check your status in the Membrane Console

Log in to your Membrane account and go to the “Connecting your development environments and AI coding agents” section of the Dashboard to see the status.

Conclusion: Scaffolding Meets Scale

AI code assistants are the new scaffolding — powerful, fast, and transformative. But scaffolding without a foundation collapses under load.

Membrane is that foundation.

By combining the creative speed of agents with the robustness of Membrane’s integration layer, teams can finally go from “It works on my machine” to “It works for every customer, every time.”

For developers, it means fewer late-night OAuth debugging sessions.

For integration engineers, it means consistency across dozens of connectors.

For product leaders, it means faster feature velocity with lower risk.

And for the ecosystem? It means the AI coding revolution finally reaches production-grade integrations.