How we use AI

Harnessing the power of AI for building integrations while avoiding hallucinations and omissions.

Generative AI can save 99% of the time spent building integrations, but only when it operates inside a robust framework. You cannot (yet) ask an LLM to build 100 integrations for you and expect a good result, but you can ask it to perform a small task, such as extracting the details of an API request from documentation, and get a correct result almost every time.

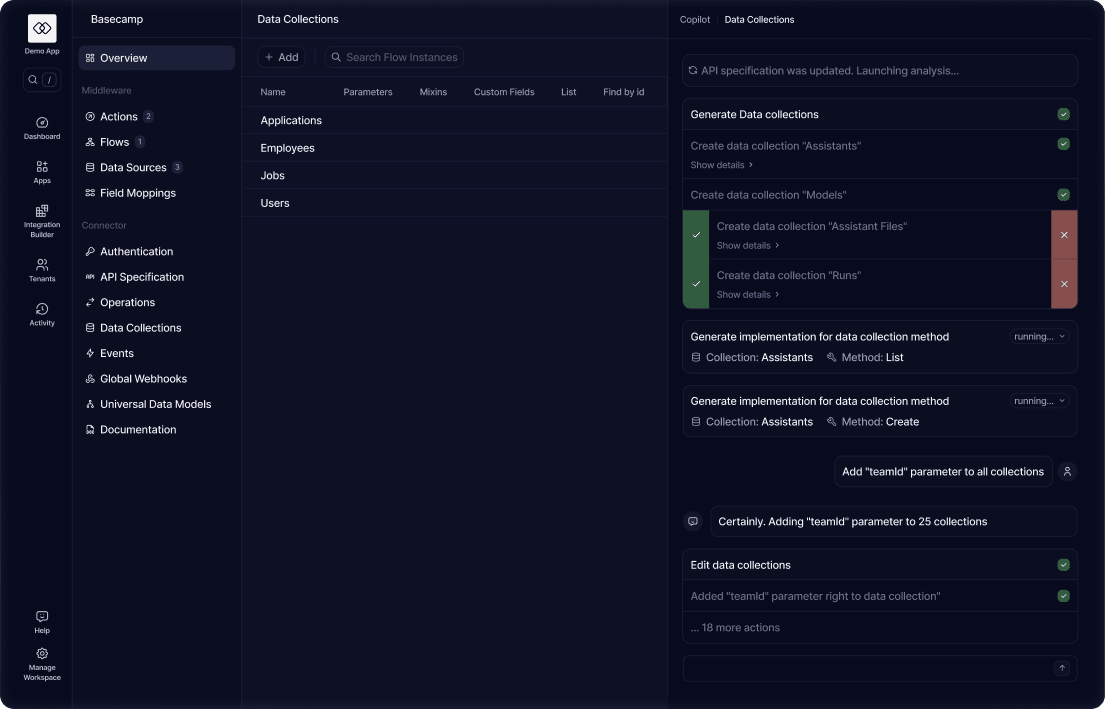

Generate and Test Universal Connectors

We feed API documentation and specifications into LLMs and ask them thousands of very specific questions. We validate the results and combine them into a Universal Connector that contains all the details about integrating with a given app in a consistent format that can be used to build integrations automatically.

We also preserve the raw information (documentation pages and API specs) to provide additional unstructured context when necessary.
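The ask, validate, and combine loop described above can be sketched roughly as follows. This is a minimal illustration, not the platform's actual implementation: `UniversalConnector`, `build_connector`, `fake_llm`, the question keys, and the validators are all hypothetical names, and the model call is replaced by a canned stand-in.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

HTTP_METHODS = {"GET", "POST", "PUT", "PATCH", "DELETE"}


@dataclass
class UniversalConnector:
    """Hypothetical container; the real connector format is not shown here."""
    app: str
    details: dict = field(default_factory=dict)     # validated, structured answers
    raw_docs: list = field(default_factory=list)    # preserved unstructured context
    unresolved: list = field(default_factory=list)  # questions that failed validation


def build_connector(
    app: str,
    docs: list,
    questions: dict,
    validators: dict,
    ask: Callable[[str, list], Optional[str]],
) -> UniversalConnector:
    """Ask one narrow question at a time and keep only answers that validate."""
    connector = UniversalConnector(app=app, raw_docs=list(docs))
    for key, question in questions.items():
        answer = ask(question, docs)
        if answer is not None and validators[key](answer):
            connector.details[key] = answer
        else:
            connector.unresolved.append(key)
    return connector


def fake_llm(question: str, docs: list) -> Optional[str]:
    """Stand-in for a model call; returns canned answers for this demo."""
    canned = {
        "Which HTTP method creates a contact?": "POST",
        "Which path creates a contact?": "contacts",  # malformed: no leading slash
    }
    return canned.get(question)


questions = {
    "create_contact.method": "Which HTTP method creates a contact?",
    "create_contact.path": "Which path creates a contact?",
}
validators = {
    "create_contact.method": lambda answer: answer in HTTP_METHODS,
    "create_contact.path": lambda answer: answer.startswith("/"),
}
docs = ["POST /contacts creates a contact."]

connector = build_connector("example-crm", docs, questions, validators, fake_llm)
print(connector.details)     # answers that passed validation
print(connector.unresolved)  # questions that still need attention
```

Keeping each question narrow means each answer can be checked by a cheap, deterministic validator, and anything that fails stays visible instead of silently entering the connector.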

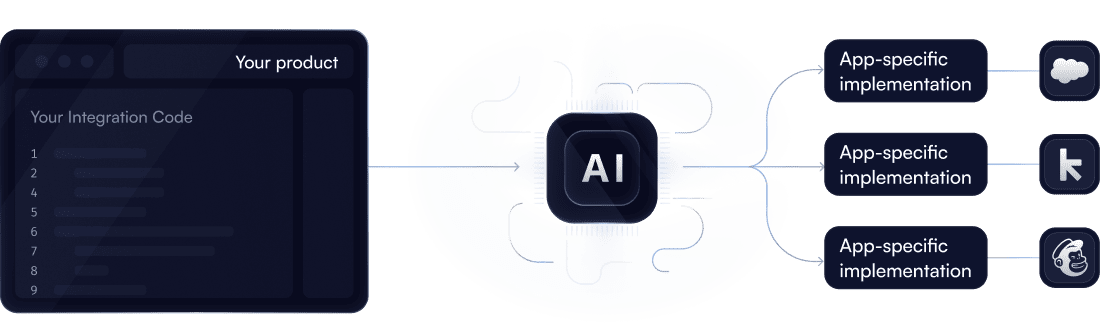

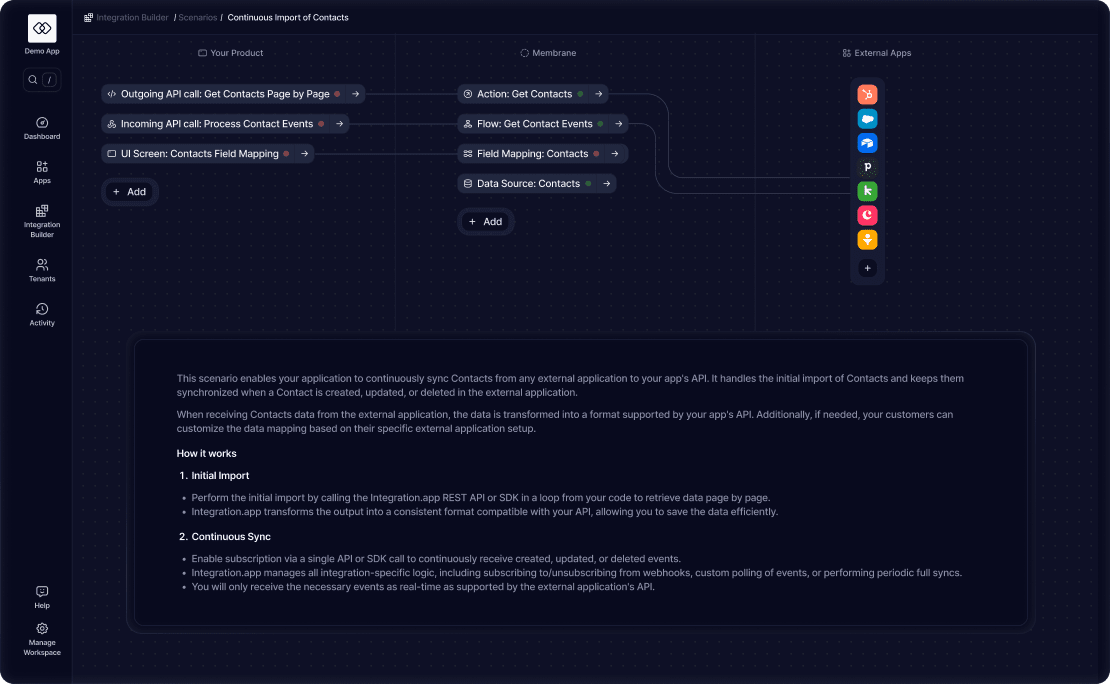

Connect Your Code to Universal Integration Blocks

To increase the precision of AI, our platform maps your integration use case to well-defined building blocks that represent every type of integration primitive: actions, flows, events, field mappings, etc.

Each building block is then connected to integration points inside your application.

This provides you with a consistent way to interact with external apps while giving AI enough details to build high-quality app-specific and customer-specific implementations.
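One way to picture building blocks and integration points is the sketch below. It assumes a hypothetical `BuildingBlock` type, a hypothetical `BlockRegistry` that holds app-specific implementations, and two made-up apps (`crm-a`, `crm-b`); none of these names come from the platform itself.

```python
from dataclasses import dataclass
from enum import Enum


class BlockKind(Enum):
    """Integration primitives named in the text above."""
    ACTION = "action"
    FLOW = "flow"
    EVENT = "event"
    FIELD_MAPPING = "field_mapping"


@dataclass(frozen=True)
class BuildingBlock:
    key: str
    kind: BlockKind
    inputs: tuple  # names of the fields the block expects


class BlockRegistry:
    """Hypothetical registry: one universal interface, many app-specific implementations."""

    def __init__(self) -> None:
        self._impls: dict = {}

    def register(self, block: BuildingBlock, app: str, impl) -> None:
        self._impls[(block.key, app)] = (block, impl)

    def run(self, block_key: str, app: str, payload: dict):
        block, impl = self._impls[(block_key, app)]
        missing = [name for name in block.inputs if name not in payload]
        if missing:
            raise ValueError(f"{block_key}: missing inputs {missing}")
        return impl(payload)


create_contact = BuildingBlock("create-contact", BlockKind.ACTION, ("email", "name"))

registry = BlockRegistry()
# Each app receives the same universal payload but shapes its own request.
registry.register(create_contact, "crm-a", lambda p: {"app": "crm-a", "Email": p["email"]})
registry.register(create_contact, "crm-b", lambda p: {"app": "crm-b", "email_address": p["email"]})


def on_user_signed_up(app: str, email: str, name: str) -> dict:
    """Integration point: a place in your own code that invokes a building block."""
    return registry.run("create-contact", app, {"email": email, "name": name})


print(on_user_signed_up("crm-a", "ada@example.com", "Ada"))
print(on_user_signed_up("crm-b", "ada@example.com", "Ada"))
```

The calling code stays identical across apps; only the registered implementation differs, which is the part that can be generated per app.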

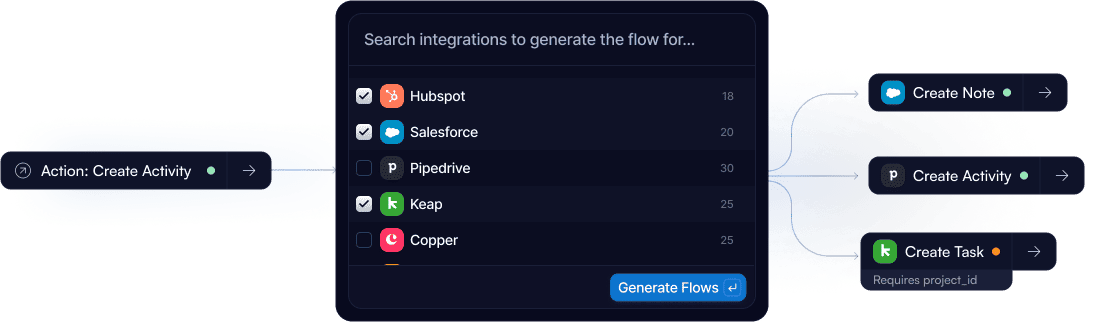

Generate App-specific Implementations of Universal Blocks

Then we map each building block in your use case to each application using information from the connector, both structured and unstructured. If the connector has enough information to implement a building block according to your specification, the implementation is generated automatically.

In some cases, a connector does not have enough information, or the decision is too ambiguous to make automatically. Our platform will let you know when this happens and let you (or our solutions team) fill the gaps.
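The decision described above (generate automatically, or flag a gap for a person to fill) could look something like the following sketch. It assumes each connector exposes a list of candidate values per required detail; `MappingResult`, `map_block`, and the sample apps are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class MappingResult:
    app: str
    block: str
    status: str  # "generated" or "needs_input"
    missing: list = field(default_factory=list)    # details the connector lacks
    ambiguous: list = field(default_factory=list)  # details with several candidates


def map_block(block: str, required: list, app: str, candidates: dict) -> MappingResult:
    """Generate only when every required detail has exactly one candidate value."""
    missing = [name for name in required if not candidates.get(name)]
    ambiguous = [name for name in required if len(candidates.get(name, [])) > 1]
    status = "generated" if not missing and not ambiguous else "needs_input"
    return MappingResult(app, block, status, missing, ambiguous)


required = ["method", "path", "email_field"]
connectors = {
    "crm-a": {"method": ["POST"], "path": ["/contacts"], "email_field": ["email"]},
    # crm-b has two plausible paths and no known email field.
    "crm-b": {"method": ["POST"], "path": ["/v1/people", "/v2/people"]},
}

results = {
    app: map_block("create-contact", required, app, details)
    for app, details in connectors.items()
}
for result in results.values():
    print(result)
```

Reporting `missing` and `ambiguous` separately tells whoever fills the gap whether they need to supply new information or simply choose between existing candidates.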

Hundreds of high-quality deep native integrations

The process above is the only practical way to integrate with hundreds or thousands of apps for any non-trivial integration use case.

The more integrations are built with us, the smarter our AI becomes when building the next one.